A bag but is language nothing of words

From Mondothèque

(language is nothing but a bag of words)

WARNING: THIS TEXT IS STILL IN DRAFT FORM AND NEEDS FURTHER EDITING

Bag of words

In information retrieval and other so-called machine-reading applications (such as text indexing for web search engines) the term "bag of words" is frequently used to underscore how in the course of processing a text the original order of the words in sentence form is stripped away.

need example / figure

Data structures such as word histograms or weighted term vectors are a standard part of the data engineer's toolkit. Why this transformation? The utility of "bag of words" is in how it makes text amenable to code, first in that it's straightforward to implement the translation from an initial document to a bag of words representation, and secondly, and more significantly, that this transformation then opens up a wide collection of tools and techniques for further transformation and analysis. For instance, a number of libraries available in the booming field of "data sciences" work with "high dimension" vectors; bag of words is a way to transform a written document into a mathematical vector where each "dimension" corresponds to a unique word. While physically unimaginable and almost comically abstract (Shakespeare's Macbeth is now a point in space with 14 million dimensions), from a formal mathematical perspective, it's quite a comfortable idea, and many complementary techniques (such as principal component analysis) exist to reduce the apparent resulting complexity.
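The transformation described above can be sketched in a few lines of Python. This is a minimal illustration rather than any particular library's method: the function names `bag_of_words` and `to_vector` are made up for the example, and the tokenizer (lowercase runs of letters and apostrophes) is a deliberately crude assumption.

```python
import re
from collections import Counter

def bag_of_words(text):
    """Return a word histogram; the order of the words is discarded."""
    # Crude tokenizer (an assumption): lowercase runs of letters/apostrophes.
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(words)

def to_vector(bag, vocabulary):
    """One dimension per vocabulary word; the value is that word's count."""
    return [bag[word] for word in vocabulary]

a = bag_of_words("language is nothing but a bag of words")
b = bag_of_words("a bag but is language nothing of words")

# Only the counts survive, so the sentence and its scramble are identical.
same = (a == b)  # True

vocabulary = sorted(a)  # one "dimension" per distinct word
vector = to_vector(a, vocabulary)
```

Once in the bag, the title of this text and its parenthetical "translation" are the same object: eight dimensions, each with a count of one.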

While "bag of words" might well serve as a cautionary reminder to programmers of the essential violence perpetrated on a text, and a call to critically question the efficacy of methods based on subsequent transformations, the expression's use seems in practice more like a badge of pride or a schoolyard taunt that would go: Hey language: you're nothing but a big BAG-OF-WORDS. Following this spirit of the term, "bag of words" celebrates a perfunctory step of "breaking" a text into a purer form amenable to computation, of stripping language of its silly redundant repetitions and foolishly contrived stylistic phrasings to reveal a purer inner essence.

Book of words

Lieber's Standard Telegraphic Code, first published in 1896 and republished in various updated editions through the early 1900s, is an example of one of several competing systems of telegraph code books. The idea was for both senders and receivers of telegraph messages to use the books to translate their messages into a sequence of code words which could then be sent more cheaply (as telegraph messages were paid by the word). In the front of the book, a list of examples gives a sampling of how messages like: "Have bought for your account 400 bales of cotton, March delivery, at 8.34" can be conveyed by simply sending a telegram with the message "Ciotola, Delaboravi". In each case the reduction of number of words from original message to code is highlighted to underscore the astounding efficacy of the method. Like a dictionary or thesaurus, the book is primarily organized around key words, such as "act", "advice", "affairs", "bags", "bail", and "bales", under which exhaustive lists of useful phrases involving the corresponding word are provided in the main pages of the volume. [1]
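The economy at stake can be made concrete with the example above. The sketch below simply counts words before and after encoding; treating every whitespace-separated token as one billable word is a simplifying assumption, not a reconstruction of any actual tariff, and `word_count` is a name invented for the illustration.

```python
def word_count(message):
    # Simplifying assumption: one billable word per whitespace-separated token.
    return len(message.split())

plain = "Have bought for your account 400 bales of cotton, March delivery, at 8.34"
coded = "Ciotola, Delaboravi"

plain_words = word_count(plain)    # 13
coded_words = word_count(coded)    # 2
saved = plain_words - coded_words  # 11
```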

[...] my focus in this chapter is on the inscription technology that grew parasitically alongside the monopolistic pricing strategies of telegraph companies: telegraph code books. Constructed under the bywords “economy,” “secrecy,” and “simplicity,” telegraph code books matched phrases and words with code letters or numbers. The idea was to use a single code word instead of an entire phrase, thus saving money by serving as an information compression technology. Generally economy won out over secrecy, but in specialized cases, secrecy was also important. [2]

In her chapter devoted to telegraph code books, Katherine Hayles observes:

The interaction between code and language shows a steady movement away from a human-centric view of code toward a machine-centric view, thus anticipating the development of full-fledged machine codes with the digital computer. [3]

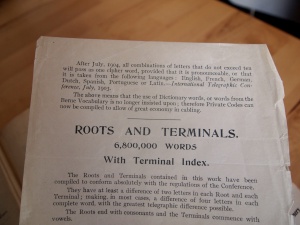

After July, 1904, all combinations of letters that do not exceed ten will pass as one cipher word, provided that it is pronounceable, or that it is taken from the following languages: English, French, German, Dutch, Spanish, Portuguese or Latin -- International Telegraphic Conference, July 1903 [4]

In Lieber's code, a page has been inserted describing how the code system conforms to the latest international telegraphic conventions: the stipulation that code words be actual words drawn from a variety of European languages (many of Lieber's code words are indeed Dutch, German, and Spanish words) underscores this particular moment of transition, as the "human" body in the form of "pronounceable" speech confronts the potential arbitrariness of a digital representation.

What telegraph code books do is remind us of the relation of language in general to economy. Whether these are economies of memory, attention, costs paid to a telecommunications company, or computer processing time or storage space, encoding knowledge is a form of shorthand and always involves an interplay with what we then expect to perform or "get out" of the resulting encoding.

Along with the invention of telegraphic codes comes a paradox that John Guillory has noted: code can be used both to clarify and occlude. Among the sedimented structures in the technological unconscious is the dream of a universal language. Uniting the world in networks of communication that flashed faster than ever before, telegraphy was particularly suited to the idea that intercultural communication could become almost effortless. In this utopian vision, the effects of continuous reciprocal causality expand to global proportions capable of radically transforming the conditions of human life. That these dreams were never realized seems, in retrospect, inevitable. [5]

Far from providing a universal system of encoding the English language, Lieber's code is quite specifically designed for the particular needs and conditions of its use. In addition to the phrases ordered by keywords, the book includes a number of tables of terms for specialized use. For instance, one table lists a set of words used to describe all possible permutations of numeric grades of coffee (Choliam = 3,4, Choliambos = 3,4,5, Choliba = 4,5, etc.); another table lists pairs of code words to express the respective daily rise or fall of the price of coffee at the port of Le Havre in increments of a quarter of a Franc per 50 kilos ("Chirriado = prices have advanced 1 1/4 francs"). From an archaeological perspective, Lieber's code book is a kind of cross section of early 20th century business communication between the United States and its trading partners.
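Seen as a data structure, such a table is a lookup from code word to meaning, and its specificity shows in what it cannot decode. The sketch below uses only the four entries quoted above; the table names, the `decode` function and the wording "grades 3, 4" are assumptions made for the illustration.

```python
# Entries quoted in the text; the book's full tables are far larger.
COFFEE_GRADES = {
    "Choliam": (3, 4),
    "Choliambos": (3, 4, 5),
    "Choliba": (4, 5),
}
PRICE_MOVES = {
    "Chirriado": "prices have advanced 1 1/4 francs",
}

def decode(word):
    """Translate one code word back into its meaning, or fail."""
    if word in COFFEE_GRADES:
        grades = ", ".join(str(g) for g in COFFEE_GRADES[word])
        return f"grades {grades}"
    if word in PRICE_MOVES:
        return PRICE_MOVES[word]
    # The code is not universal: anything outside the tables is unreadable.
    raise KeyError(f"{word!r} is not in the code book")

message = [decode(w) for w in ["Choliambos", "Chirriado"]]
# → ['grades 3, 4, 5', 'prices have advanced 1 1/4 francs']
```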

The advertisements lining the book give a rich accounting of the situation of the code's use (and the commercial use of telegraphy in general). Among the advertisements for banking services, law offices, alcohol, and office equipment are several ads for gun powder and explosives, drilling equipment and metallurgic services specific to mining. Complementing telegraphy's role in communicating with and between ships for safety reasons, commercial telegraphy provided a means of coordinating the "raw materials" being mined, grown, or otherwise extracted from overseas sources and shipped back for sale.

"Raw data now!"

Tim Berners-Lee: [...] Make a beautiful website, but first give us the unadulterated data, we want the data. We want unadulterated data. OK, we have to ask for raw data now. And I'm going to ask you to practice that, OK? Can you say "raw"? Audience: Raw. Tim Berners-Lee: Can you say "data"? Audience: Data. TBL: Can you say "now"? Audience: Now! TBL: Alright, "raw data now"! [...] So, we're at the stage now where we have to do this -- the people who think it's a great idea. And all the people -- and I think there's a lot of people at TED who do things because -- even though there's not an immediate return on the investment because it will only really pay off when everybody else has done it -- they'll do it because they're the sort of person who just does things which would be good if everybody else did them. OK, so it's called linked data. I want you to make it. I want you to demand it.[6]

Un/Structured

The World Wide Web provides a vast source of information of almost all types, ranging from DNA databases to resumes to lists of favorite restaurants. However, this information is often scattered among many web servers and hosts, using many different formats. If these chunks of information could be extracted from the World Wide Web and integrated into a structured form, they would form an unprecedented source of information. It would include the largest international directory of people, the largest and most diverse databases of products, the greatest bibliography of academic works, and many other useful resources. [...]

2.1 The Problem

Here we define our problem more formally:

Let D be a large database of unstructured information such as the World Wide Web [...] [7]

A traditional algorithm could not compute the large itemsets in the lifetime of the universe. [...] Yet many data sets are difficult to mine because they have many frequently occurring items, complex relationships between the items, and a large number of items per basket. In this paper we experiment with word usage in documents on the World Wide Web (see Section 4.2 for details about this data set). This data set is fundamentally different from a supermarket data set. Each document has roughly 150 distinct words on average, as compared to roughly 10 items for cash register transactions. We restrict ourselves to a subset of about 24 million documents from the web. This set of documents contains over 14 million distinct words, with tens of thousands of them occurring above a reasonable support threshold. Very many sets of these words are highly correlated and occur often. [8]

Un/Ordered

In programming, I've encountered a recurring "problem" that's quite symptomatic. It goes something like this: you (the programmer) have managed to cobble together a lovely "content management system" (either from scratch, or using one of dozens of popular frameworks) where author(s) (the client) can enter some "items" (for instance bookmarks) into a database. These items are then automatically ordered and presented in list form (say on a starting page). The author: It's great, except... could this bookmark come before that one? The problem stems from the fact that the database ordering (a core functionality provided by any database) somehow applies a sorting logic that's almost but not quite right. A typical example is the sorting of names, where details (where to place a name that starts with a Norwegian "Ø", for instance) are language-specific, and when a mixture of languages occurs, no single ordering is necessarily "correct". The (often) exasperated programmer hastily adds an additional widget so that each item can also have an "order" (perhaps in the form of a date, or just some kind of (alpha)numerical "sorting" value) to be used to correctly order the resulting list. Now the author has a means, awkward and indirect but workable, to control the order of the presented data on the start page. But one might well ask, why not just edit the resulting listing as a document? This problem, in this and many variants, is widespread and reveals an essential backwardness in a particular "computer scientist" mindset about what constitutes "data", and in particular its relationship to order, which turns what might be a straightforward question of editing a document into an over-engineered database.
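The scenario can be sketched in miniature. Python's default string comparison goes by Unicode code point, which stands in here for whatever "almost but not quite right" logic a database applies; the names, the `order` field and its values are invented for the illustration.

```python
names = ["Zola", "Ørsted", "Olsen"]

# Default sort: by Unicode code point, so "Ø" (U+00D8) lands after "Z".
by_code_point = sorted(names)
# → ['Olsen', 'Zola', 'Ørsted']

# The programmer's workaround: an extra "order" value on every item.
bookmarks = [
    {"title": "Zola", "order": 2},
    {"title": "Ørsted", "order": 1},
    {"title": "Olsen", "order": 3},
]
by_hand = [b["title"] for b in sorted(bookmarks, key=lambda b: b["order"])]
# → ['Ørsted', 'Zola', 'Olsen']

# The "document" alternative: the list as written is already the order.
as_document = ["Ørsted", "Zola", "Olsen"]
```

The last line is the point of the anecdote: the sequence the author wants is simply the sequence as written, with no sorting key at all.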

While I was recently working with Nikolaos Vogiatzis, whose research explores playful and radically subjective alternatives to the list, Vogiatzis was struck by how the earliest specifications of HTML (still valid today) have separate elements (OL and UL) for "ordered" and "unordered" lists.

The representation of the list is not defined here, but a bulleted list for unordered lists, and a sequence of numbered paragraphs for an ordered list would be quite appropriate. Other possibilities for interactive display include embedded scrollable browse panels.

List elements with typical rendering are:

UL: A list of multi-line paragraphs, typically separated by some white space and/or marked by bullets, etc.

OL: As UL, but the paragraphs are typically numbered in some way to indicate the order as significant. [9]

Vogiatzis's surprise lay in the idea of a list ever being considered "unordered" (or, in opposition to the language used in the specification, of order ever being considered "insignificant"). Indeed, in its suggested representation, still followed in a modern web browser, the only visual difference between the two is that UL list items are preceded by a bullet symbol, while OL items are numbered.

(Separation of content and representation)

The idea of ordering runs deep in programming practice where essentially different data structures are employed depending on whether order is to be maintained. Many common data structures (the hash table or associative array for instance) are structured to offer other kinds of efficiencies (fast text-based retrieval for instance), at the expense of losing any original sequence (the keys of a hash table are ordered in an unpredictable way governed by the needs of that representation's particular implementation).
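The distinction is easy to see in Python, used here only as one illustration. A list keeps the sequence it was given; a set, which is hash-based, offers fast membership tests but makes no promise about the order in which it yields its elements (for strings in CPython it can differ from one run to the next). A set is used rather than a dict because Python's dict happens to preserve insertion order.

```python
words = ["language", "is", "nothing", "but", "a", "bag", "of", "words"]
scrambled = ["a", "bag", "but", "is", "language", "nothing", "of", "words"]

as_list = list(words)  # sequence preserved
as_set = set(words)    # hash-based: membership is fast, order is not kept

# As lists, the sentence and its scramble are different objects...
lists_equal = (as_list == scrambled)     # False
# ...as sets, they cannot be told apart.
sets_equal = (as_set == set(scrambled))  # True

has_bag = "bag" in as_set  # True, found by hash lookup rather than by scanning
```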

Data mining

bags of words, bales of cotton, barrels of oil

In announcing Google's impending data center in Mons, Belgian prime minister Di Rupo made the link between the history of the mining industry in the region and the present and future interest in "data mining" as practiced by Google. Apart from a metaphorical linkage, what in fact perhaps better links these different notions of "mining" is the way in which the notion of "raw material" obscures the hidden labor and power differentials employed to secure them.

"Raw" is always relative: "purity" depends on processes of "refinement" that typically carry heavy social / ecological impact.

There's a parallel between the "disembodiment" implied in stripping language of its essential order (and thus of its situatedness in the act of reading or writing) and a colonial logic of profit from the trade in resources produced by undervalued labor and practices with devastating ecological impact.

There's a parallel in a shifting of responsibilities away from the individual / political related to the "human scale" and toward the obscured responsibilities and seemingly inevitable forces of the "machine scale", be it the machine of market or the machine of algorithm.

Parallel shifts: in telegraphy and telephony a shift occurs from language as something performed by a human body to language captured in code and occurring at a machine scale. In document processing a similar shift occurs from language as writing to language as symbolic sets of information, to be treated with statistical methods for extracting knowledge in the form of relationships.

Often when we speak of machine or computer in terms like machine reading or computer vision, we speak of a displacement of human labour ... often to apply condensed human labour typically embodied in the form of (trained) statistical models ... and a displacement of responsibility as values become encoded in the form of algorithms/software.

The interest in the "machinic" (minimal human intervention) involves, at first glance, the "machinic" in the traditional sense of automating labour, replacing the human work of categorizing with an automated process; in this way opening up the process to a larger quantity of pages and a range of "esoteric" topics which would not be possible to handle with traditional editorial processes. This "machinic" shift is a business model that learns to extract the value of web surfers' behaviour; this process is then echoed in Google's book digitization, which similarly "extracts" / exploits the value of the collection librarian (on top of the work of the author, the typesetter, the publisher).

The computer scientist's view of textual content as "unstructured", be it in a webpage or the pages of a scanned text, underscores / reflects a neglect of the processes and labor of writing, editing, design, layout, typesetting, and eventually publishing, collecting and cataloging [10].

In other words, by "unstructured" it is meant: unstructured in relation to the machine -- that is, not explicitly structured in a format directly amenable to use by automated means. "Structuring" then is a process by which structure is made explicit through the use of standards of markup (such as HTML/XML). In this way, the computer scientist is viewing a text through the eyes of their reading algorithm, and in the process (voluntarily) blinding themselves to the work practices which have produced, and maintain, the given textual resources, choosing to view them as instead somehow "freely given" and available to exploit as a "raw material".

1. Benjamin Franklin Lieber, Lieber's Standard Telegraphic Code, 1896, New York; https://archive.org/details/standardtelegrap00liebuoft
2. Katherine Hayles, "Technogenesis in Action: Telegraph Code Books and the Place of the Human", How We Think: Digital Media and Contemporary Technogenesis, 2006
3. Hayles
4. Lieber
5. Hayles
6. Tim Berners-Lee, The next web, TED Talk, February 2009; http://www.ted.com/talks/tim_berners_lee_on_the_next_web/transcript?language=en
7. Sergey Brin, Extracting Patterns and Relations from the World Wide Web, Proceedings of the WebDB Workshop at EDBT 1998; http://www-db.stanford.edu/~sergey/extract.ps
8. Sergey Brin and Lawrence Page, Dynamic Data Mining: Exploring Large Rule Spaces by Sampling, 1998, p. 2; http://ilpubs.stanford.edu:8090/424/
9. Tim Berners-Lee and Daniel Connolly, Hypertext Markup Language (HTML): "Internet Draft", June 1993; http://www.w3.org/MarkUp/draft-ietf-iiir-html-01.txt
10. http://informationobservatory.info/2015/10/27/google-books-fair-use-or-anti-democratic-preemption/#more-279